Purpose – Document AI Resources

In this post, I will describe the AI training resources that I am using in my quest to catch up with the current state of the art. My goal is to document the resources that I used for my own reference and to benefit others while explaining my reasoning along the way.

After studying AI back in the 1980s, I did not keep my AI skills up to date until recently, when I started learning about newer things like ChatGPT. AI is in the news, and every technical person should learn something about it for their own understanding and to help their career. Because I had a good overall understanding of the state of AI years ago, I think of it as “catching up” with the present-day standard. So, my approach makes sense for me given my history. But even for people who are not as old as I am, or who don’t have AI experience from their past, the resources below could still be useful because of their overall quality.

Revisit AI Overview – 6.034

The first thing I did was watch the lecture videos for Patrick Winston’s 2010 MIT 6.034 class. I had used Winston’s textbook in my college AI class in the 1980s. So, I thought that a class taught by Winston might follow a similar outline to what I once knew about AI, jog my memory, and catch me up on what has changed in the last 30+ years. Just watching the lecture videos and not doing the homework and reading limited how much I got from the class, but it was a great high-level overview of the different areas of AI. Also, since it was from 2010, it was good for me because it was closer to the present day than my 1980s education, though still 14 years away from the current ChatGPT hoopla. One very insightful part of the videos is lectures 12A and 12B, which are about neural nets and deep neural nets. Evidently in 2010 Professor Winston said that neural nets were not that promising or could not do that much. Fast forward 5 years and he revised that lecture with two more modern views on neural nets. Of course, ChatGPT is based on neural nets, as are many other useful AI things today. After reviewing the 6.034 lecture videos, I was on the lookout for a more in-depth, hands-on class as the next step in my journey.

edX Class With Python – 6.86x

I had talked with ChatGPT about where to go next in my AI journey and it made various suggestions including books and web sites. I also searched around using Google. Then I noticed an edX class about AI called “Machine Learning with Python-From Linear Models to Deep Learning,” or “6.86x”. I was excited when I saw that edX had a useful Python-based AI class. I had a great time with the two earlier Python edX classes which I took in 2015. They were:

- 6.00.1x – Introduction to Computer Science and Programming Using Python

- 6.00.2x – Introduction to Computational Thinking and Data Science

This blog has several posts about how I used the material in those classes for my work. I have written many Python scripts to support my Oracle database work including my PythonDBAGraphs scripts for graphing Oracle performance metrics. I have gotten a lot of value from learning Python and libraries like Matplotlib in those free edX classes. So, when I saw this machine learning class with Python, I jumped at the chance to join it with the hope that it would have hands-on Python programming with libraries that I could use in my regular work just as the 2015 classes had.

Math Prerequisites

I almost didn’t take 6.86x because the prerequisites included vector calculus and linear algebra, which are advanced areas of math. I may have taken these classes decades ago, but I haven’t used them since. I was afraid that I might be overwhelmed and not able to follow the class. But since I was auditing the class for free, I felt like I could “cheat” as much or as little as I wanted because the score in the class didn’t count for anything. I used my favorite algebra system, Maxima, when it was too hard to do the math by hand. I had some very interesting conversations with ChatGPT about things I didn’t understand. My initial fears about the math were unfounded. The class helped with the math as much as possible to make it easier to follow. And, at the end of the day, since I wasn’t taking this class for any kind of credit, the points didn’t matter (like the Drew Carey improv show). What mattered was that I got something out of it. I finished the class this weekend, and although the math was hard at times, I was able to get through it.

How to Finish Catching Up to 2024

It looks like 6.86x is 4-5 years old so it caught me up to 2019. I have been thinking about where to go from here to get all the way to present day. Working on this class I found two useful Python resources that I want to pursue more:

- PyTorch – Neural Nets

- Hugging Face – Large Language Models

Neural Nets with PyTorch

I walked away from both 6.034 and 6.86x realizing how important neural nets are to AI today. PyTorch is a top Python library for neural nets, originally developed by Facebook, and I got some experience with it in the class. Given the importance of neural nets, it makes sense to dig deeper into PyTorch as a tool that I can use in my database work. As I understand it, Large Language Models such as those behind ChatGPT are built using tools like PyTorch. Plus, neural nets have many other applications outside of LLMs. I hope to find uses for PyTorch in my database work and post about them here.
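For readers who have not seen PyTorch, here is a minimal sketch of what training a small neural net can look like. It is not taken from 6.86x. The toy data (points on the line y = 2x + 1), the layer sizes, the learning rate, and the number of passes are all assumptions picked only for illustration.

```python
import torch
from torch import nn

torch.manual_seed(0)  # fix the random weight initialization so runs repeat

# Toy data (an assumption for this sketch): 64 points on the line y = 2x + 1
x = torch.linspace(-1.0, 1.0, 64).unsqueeze(1)  # shape (64, 1)
y = 2.0 * x + 1.0

# A tiny network: one hidden layer of 8 units with a ReLU activation
model = nn.Sequential(nn.Linear(1, 8), nn.ReLU(), nn.Linear(8, 1))
loss_fn = nn.MSELoss()
optimizer = torch.optim.SGD(model.parameters(), lr=0.1)

losses = []
for epoch in range(200):
    optimizer.zero_grad()        # clear gradients from the previous pass
    loss = loss_fn(model(x), y)  # forward pass and mean squared error
    loss.backward()              # backpropagation computes the gradients
    optimizer.step()             # gradient descent updates the weights
    losses.append(loss.item())

print(f"first loss {losses[0]:.4f}, last loss {losses[-1]:.4f}")
```

The loop has the same overall shape whether the model is this small or much larger: run the data through the model, measure the error, backpropagate, and adjust the weights. Watching the printed loss shrink is the simplest way to see training converge.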

Large Language Models with Hugging Face

Everyone is talking about ChatGPT and LLMs today. I ran across the Hugging Face site during my class. I can’t recall if the class used any of the models there or if I just ran across them as I was researching things. I would really like to download some of the models there and play with updating them and using them. I have played with LLMs before but have not gotten very far. I tried using OpenAI’s API to do completions. I played with storing vectors in MongoDB. But I didn’t have the background that I have now from 6.86x to understand what I was working with. If I can find the time, I would like to both play with the models from Hugging Face and revisit my earlier experiments with OpenAI and MongoDB. Also, I think Oracle 23ai has vectors, so I might try it out. But one thing at a time! First, I really want to dig into PyTorch and then mess with Hugging Face’s downloadable models.

Recap

I introduced several resources for learning about artificial intelligence. MIT’s OCW class from 2010, 6.034, has lecture videos from a well-known AI pioneer. 6.86x is a full-blown online machine learning class with Python that you can audit for free or take for credit. The Python library PyTorch provides cutting-edge neural net functionality. Hugging Face lets you download a wide variety of large language models. These are some great resources to propel me on my way to catching up with the present-day state of the art in AI, and I hope that they will help others in their own pursuits of AI understanding.

Bobby

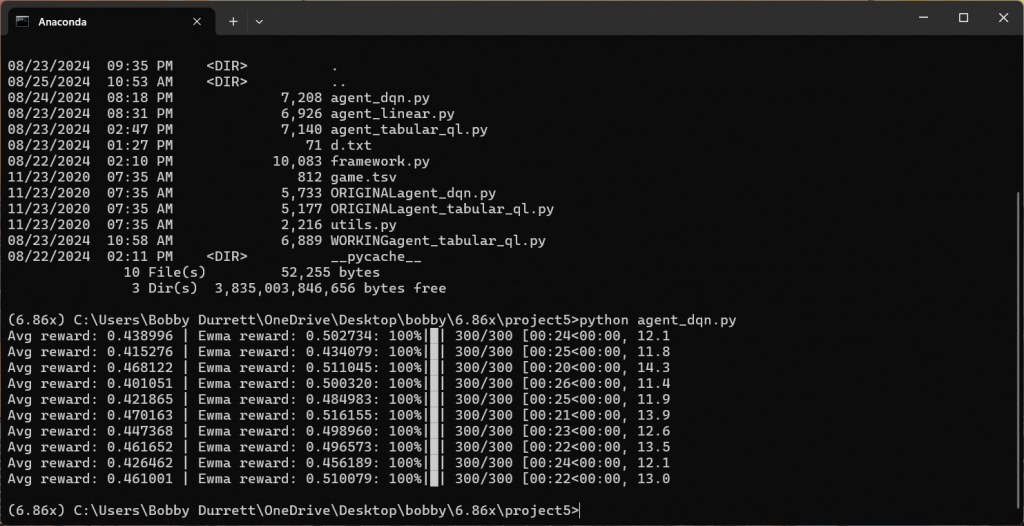

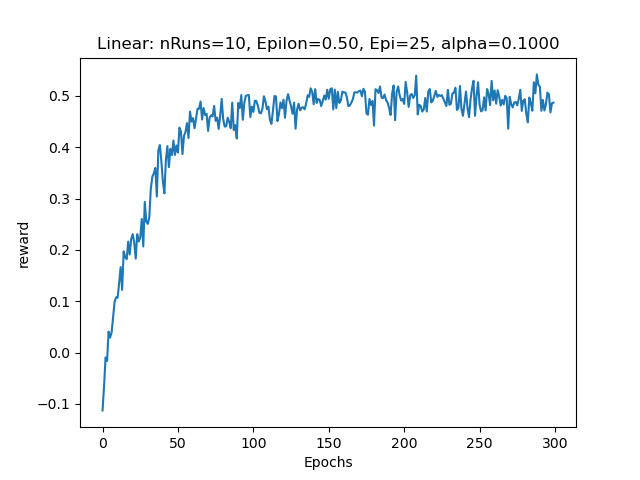

P.S. Here are a couple of fun screenshots from the last project in my 6.86x class. Hopefully it won’t give too much away to future students.

This is a typical run using PyTorch to train a model for the last assignment.

This is the graphical output showing when the training converged on the desired output.
